The AI Layoff Trap: When Local Efficiency Becomes Systemic Fragility

On paper, the AI layoff case looks clean. If a business can automate part of its support queue, reduce its content team, ship the same backlog with fewer engineers, or replace a layer of operations work with agentic models and workflows, the wage bill falls. If revenue holds, margins improve. If the market rewards near‑term efficiency, management looks rational.

This logic is not fake. It is just incomplete.

Workers are not only a cost line. They are also customers, taxpayers, future hires, domain experts, and the source of a lot of organisational learning that does not show up neatly in a quarterly spreadsheet. Once many firms make the same local optimisation at the same time, the system they were optimising against starts changing beneath them.

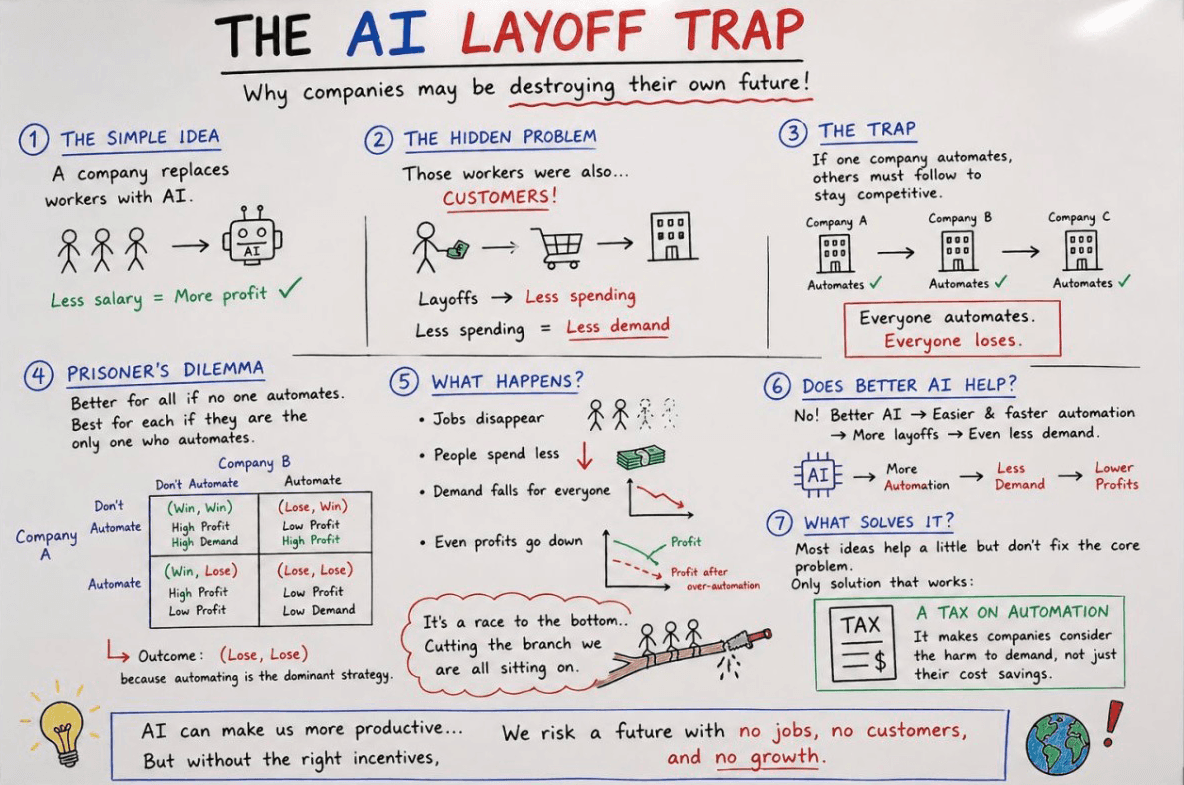

That is the AI layoff trap.

For engineering and technology leaders, this is not just a labour‑market question. It is a systems‑design problem: local optimisation, feedback loops, hidden dependencies, brittle capacity, and badly scoped metrics.

AI automation is not the trap. The trap is mass labour displacement without mechanisms to preserve purchasing power, distribute productivity gains, maintain organisational learning, and prevent the gains from concentrating among a small number of firms and asset owners.

The evidence does not support a simple binary. The IMF's January 2024 overview of AI and the global economy frames AI exposure as both substitution and complementarity. A large share of work is exposed, especially in advanced economies, but exposure is not the same as replacement. Some jobs become more productive. Others face lower labour demand, weaker wage pressure, or slower hiring. That split matters much more than the all‑too‑familiar "AI takes jobs" headline.

The Simple Company‑Level Calculation

For an individual firm, the case for aggressive automation can look quite sensible.

If generative AI reduces the time needed for routine coding, first‑draft copy, customer support responses, QA triage, campaign operations, or internal reporting, then management sees three immediate options. It can grow output with the same team. It can keep output flat and cut costs. Or it can do both selectively, increasing pressure on every team that still looks labour‑heavy.

The incentive becomes stronger when adoption stops looking experimental. NBER's working paper on the rapid adoption of generative AI found that, by late 2024, 23 percent of employed respondents in the United States had used generative AI for work in the previous week. A later published version put the figure at 27 percent, and adoption does not appear to have slowed since.

Once a tool becomes normal inside work rather than experimental at the edge, the board‑level question changes from "should we use it?" to "why has this not changed the cost base yet?"

That pressure is strongest when executive decisions are made relative to peers rather than in isolation. If peers are presenting AI savings to boards, nobody wants to be the team defending "unnecessary" headcount. The World Economic Forum's Future of Jobs 2025 work captures that tension directly: 77 percent of employers expect to provide AI training, whilst 41 percent expect to reduce their workforce in roles exposed to AI‑induced skills obsolescence.

That is the local calculation. Use the tool, remove cost, defend margin, do not be the slow mover.

The trap begins when every firm treats that narrow logic as the whole story.

The Hidden Demand Problem

The macroeconomic problem is not especially mysterious. Most consumer demand still depends, directly or indirectly, on people having income. When wages are compressed, hiring slows, or large groups of workers are pushed into weaker bargaining positions, the customer base weakens too.

That does not mean every automated task destroys demand. It means the system only works well when productivity gains are translated into one or more of the following:

- lower prices that actually reach customers

- higher wages or profit‑sharing for workers

- shorter working time without equivalent pay loss

- new tasks and new jobs arriving fast enough to replace lost income

- public redistribution strong enough to stop demand collapsing

Without those mechanisms, the savings one firm books as efficiency gains can reappear elsewhere as weaker sales, weaker tax receipts, or a more fragile labour market.
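
To make that leakage concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: ten identical firms, a hypothetical `wage_spend_share` of wages that comes back to those same firms as sales, and a uniform 20 percent wage-bill cut. It is not calibrated to any real data. It only shows how much of a booked saving survives depending on how many firms cut at once.

```python
def firm_profit(cuts_self, n_others_cutting, n_firms=10, wage_bill=100.0,
                other_revenue=40.0, wage_spend_share=0.8, cut=0.2):
    """Profit of one firm in a toy economy of identical firms (all values hypothetical)."""
    reduced = wage_bill * (1 - cut)
    own_wages = reduced if cuts_self else wage_bill
    n_others_holding = (n_firms - 1) - n_others_cutting
    total_wages = own_wages + n_others_cutting * reduced + n_others_holding * wage_bill
    # Assumption: workers spend a fixed share of wages, split evenly across firms.
    revenue = other_revenue + wage_spend_share * total_wages / n_firms
    return revenue - own_wages


baseline = firm_profit(False, 0)
lone_cutter_gain = firm_profit(True, 0) - baseline
everyone_cuts_gain = firm_profit(True, 9) - baseline
booked_saving = 100.0 * 0.2

print(f"booked saving per firm:   {booked_saving:.1f}")
print(f"gain if only we cut:      {lone_cutter_gain:.1f}")
print(f"gain if every firm cuts:  {everyone_cuts_gain:.1f}")
```

With these made-up parameters, a lone cutter keeps 18.4 of the 20 it books, while each firm keeps only 4 when all ten cut together. Profit still rises in both cases here; the point is the gap between the two numbers, not a forecast.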

This is also where people often overcorrect into a bad argument of their own. The problem is not a crude "there is a fixed amount of work" story. New technology can create new tasks, new products, and new industries. Acemoglu and Restrepo's task‑based model is still one of the clearest ways to describe the mechanism. Automation creates a displacement effect, while new tasks can create a reinstatement effect that raises labour demand again.

The difficulty is that the second effect is not automatic, immediate, or evenly distributed.

New tasks may arrive in different sectors, different geographies, and at different wage levels. They may require skills the displaced workforce does not yet have. They may benefit a narrower labour pool. Or they may be captured mostly as capital income rather than labour income. That is why "technology creates new jobs" is directionally true in the long run but often useless as a management excuse in the short run.

Why This Is Not Automatic Doom

It is worth being precise here, because the anti‑AI version of this argument is just as weak as the booster version.

If AI helps firms produce better goods and services more cheaply, and if competition passes enough of that gain through to customers, living standards can rise. If workers use AI to become more productive and keep a meaningful share of the upside, real incomes can rise. If adoption is paired with retraining, redeployment, and new business formation, the demand side can recover or even strengthen.

There is already evidence for useful augmentation. Anthropic's first Economic Index report found that observed Claude usage leaned slightly more toward augmentation than automation (57 percent versus 43 percent), with usage concentrated in software development and technical writing tasks.

Specific deployments can also produce meaningful gains. In the customer support setting studied in NBER's "Generative AI at Work", access to a generative AI assistant increased productivity by 14 percent on average, with a 34 percent improvement for novice and low‑skilled workers, alongside better customer sentiment and retention.

So the real question is not whether AI can create value. It plainly can.

The real question is who captures that value, how fast displacement happens relative to adaptation, and whether the gains circulate back into the wider economy or pool at the top of a narrow ownership stack.

The Prisoner's Dilemma of AI Automation

This is why the problem behaves like a prisoner's dilemma.

If no firm automates aggressively for headcount reduction, nobody gets an immediate labour‑cost advantage, but demand and capability remain broadly stable.

If one firm automates faster than peers, it may improve margin first, signal modernisation first, and potentially cut price first.

If every firm follows that same logic, each one may improve its own ratio while jointly weakening the demand base, the talent pipeline, and the institutional resilience the whole market depends on.
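
The three cases above can be written as a toy two-firm payoff table. The numbers below are hypothetical and deliberately chosen so that the dilemma binds; whether real payoffs have this ordering is an empirical question. The sketch checks the two properties that define the trap: cutting is each firm's best reply whatever the other does, yet mutual restraint pays each firm more than mutual cutting.

```python
# Hypothetical payoffs (arbitrary profit units) for two firms, A and B.
# Keys are (A's move, B's move); values are (A's payoff, B's payoff).
HOLD, CUT = "hold", "cut"
PAYOFFS = {
    (HOLD, HOLD): (3, 3),  # stable demand and capability, no cost edge
    (CUT, HOLD): (5, 1),   # A books the margin gain first
    (HOLD, CUT): (1, 5),   # B books the margin gain first
    (CUT, CUT): (2, 2),    # both cut, and the shared base weakens
}


def best_reply_for_a(b_move):
    """A's payoff-maximising move, given B's move."""
    return max((HOLD, CUT), key=lambda a_move: PAYOFFS[(a_move, b_move)][0])


# Cutting is dominant if it is A's best reply to every move B can make.
cut_is_dominant = all(best_reply_for_a(b) == CUT for b in (HOLD, CUT))
# Mutual restraint beats mutual cutting for each firm.
restraint_pays_more = PAYOFFS[(HOLD, HOLD)][0] > PAYOFFS[(CUT, CUT)][0]

print(cut_is_dominant, restraint_pays_more)
```

The table is symmetric, so the same reasoning applies to firm B.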

That tension is not theoretical. Firms talk about upskilling and redeployment because they know they still need capability. They also talk about workforce reduction because the short‑term arithmetic is attractive. Both impulses are real. That is exactly why the trap exists.

The key point is not that universal automation always becomes lose‑lose. Sometimes lower costs, better tools, and new categories more than compensate. The point is that private incentives do not reliably produce that outcome on their own. They often produce a race in which every firm feels compelled to cut faster than the system can safely absorb.

Why Better AI Does Not Automatically Solve the Problem

A common mistake is to assume that better models solve the coordination problem by brute force. They do not. Better models usually make the coordination problem more urgent.

If capability improves, automation gets cheaper, faster, and more general. More workflows become worth redesigning. More labour categories become exposed. More boards ask harder questions about why a team still exists in its current shape.

One useful lens comes from METR's work on task length. It estimates that the length of tasks frontier systems can complete with 50 percent reliability has been doubling roughly every seven months, while also stressing that translating capability growth into real‑world economic impact is messy.

That means capability acceleration can increase productive capacity far faster than institutions adapt. Training systems, labour law, corporate governance, competition policy, education, and internal operating models do not move on seven‑month cycles.
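
As a rough sense of scale, the arithmetic of a seven-month doubling time can be sketched as follows. This assumes the trend simply continues at a steady rate, which is an extrapolation for illustration rather than a forecast, and it measures task length, not economic impact.

```python
def capability_multiplier(months, doubling_months=7.0):
    """Growth factor in task length after `months`, assuming steady doubling."""
    return 2 ** (months / doubling_months)


# Compare against planning horizons that institutions commonly work to.
for months in (12, 24, 36):
    print(f"{months} months -> x{capability_multiplier(months):.1f}")
```

Under that assumption the multiplier is a little over 3x after one year and about 35x after three, which is the mismatch with slower-moving institutions that the paragraph above describes.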

So no, better AI does not remove the trap. Under the wrong incentives, it makes the trap easier to enter, because the local case for substitution becomes stronger before the system‑level safeguards are ready.

The Engineering Leadership Problem

This becomes very concrete once you move from macroeconomics into delivery teams. It is also why the blunt "Will AI Replace Front‑End Developers?" framing keeps missing the hardest part. Code generation is only one small part of the job.

There is a big difference between using AI to augment a team and using AI to hollow it out.

Augmentation means the team keeps its judgement, context, and coverage, but removes routine friction. Engineers use AI for scaffolding, code search, draft tests, migration helpers, incident note synthesis, or exploratory spikes. Support teams use it for suggested replies and case triage. Product teams use it to cluster research notes faster. Editorial teams use it to accelerate first drafts while keeping real review and subject matter ownership.

Hollowing out is different. That is when leadership treats those gains as proof that fewer people are needed across the board, before asking what those people were really carrying.

Senior engineers do not only write code. They hold architecture history, review standards, failure modes, operational instincts, domain models, incident memory, mentoring capacity, and the judgement to know when the obvious answer is wrong. Remove too much of that too quickly and the organisation can look more efficient right up until the first ugly migration, production incident, regulatory change, or cross‑system redesign.

A software platform team can look leaner on paper after automating ticket throughput, test generation, and delivery reporting, while quietly losing the people who understand why the checkout flow, membership platform, CMS model, pricing logic, and analytics events behave the way they do. The damage rarely appears in the first sprint. It shows up during the migration, the incident, the compliance change, or the market‑specific rollout where the hidden assumptions finally matter.

That is also why AI's impact on developers is better understood as a shift in accountability than a simple replacement story. The tools can accelerate parts of the workflow without reducing the need for experienced judgement at the edges.

The evidence on software productivity is already mixed enough to justify caution. Some deployments show strong gains in narrow support workflows. But METR's early‑2025 study of experienced open‑source developers found that, in that very specific context, developers using AI tools took 19 percent longer on average, even though they expected a speed‑up. METR has since reported later experimental results showing some evidence of speed‑up, with wide confidence intervals and selection effects. That makes the broader point stronger rather than weaker: AI productivity effects are highly sensitive to task type, tooling, context, and measurement design. The important lesson is not "AI makes engineers slower". The lesson is that high‑context work in familiar, messy systems does not behave like benchmark demos.

The same pattern appears outside engineering.

- In customer service, an assistant can raise throughput, but cutting too deeply can remove escalation knowledge, coaching bandwidth, and the human signals that reveal product problems.

- In marketing operations, AI can produce more assets per week, but if editorial judgement disappears the result may be more volume and less persuasion. That is close to the problem in GEO vs. SEO: more output does not fix weak information architecture, thin expertise signals, or vague claims.

- In product discovery, models can summarise calls and cluster notes, but replacing researchers and product thinkers reduces the chance of seeing the awkward, surprising signal that changes the roadmap.

- On digital platforms, AI can automate seller or user support, but over‑optimising for ticket deflection can quietly make onboarding colder, slower, and more error‑prone.

That is what brittleness looks like. It does not announce itself as brittleness at first. It announces itself as efficiency.

The Productivity Distribution Problem

Even when AI does create genuine productivity gains, leadership still needs to ask where those gains go.

Do they go to employees as better pay, better tools, less drudge work, or shorter working weeks? Do they go to customers as lower prices and better service? Do they stay inside the firm as stronger margins? Do they flow onward to shareholders? Or do they leak straight through to model vendors, cloud providers, and the owners of scarce compute, distribution, and data assets?

This matters because some reported "productivity" is not new value creation in the broad economic sense. Sometimes it is margin transfer.

Concentration is part of the distribution problem too. In April 2024 the CMA warned that a small number of incumbent technology firms held strong positions both in the development of foundation models and in the deployment layer, via critical inputs like compute, data, and talent, plus routes to market such as apps and platforms. It also warned that these firms may have both the ability and incentive to shape the market in their own interests.

That should matter to any CTO or transformation lead. If your firm "saves" money by reducing labour while becoming structurally dependent on a small number of external AI and cloud suppliers, some of the gain may be much less durable than it looks. You may simply be swapping employee cost for platform dependence.

The broader automation literature is relevant here too. Acemoglu and Restrepo's 2024 NBER paper on automation and rent dissipation argues that automation can target high‑rent tasks, amplify wage losses, and reduce or even negate a large share of the productivity gain once distributional distortions are accounted for.

Again, the warning is not "never automate". It is that badly distributed automation can look efficient at firm level while being wasteful at system level.

The Measurement Problem

This is where a lot of leadership teams make things worse for themselves. They measure what is easy, not what matters.

Headcount reduction is easy to measure. Ticket deflection is easy to measure. Cost per task, output volume, prompt count, cycle time, and story throughput are all easy to measure.

None of those tells you whether the organisation just got healthier.

If you are a CTO, VP of Engineering, Head of Product, or transformation lead, the better measures are less flattering and more useful:

- revenue resilience, not just cost removal

- customer satisfaction and resolution quality, not just ticket avoidance

- defect rates, rework, and incident frequency, not just delivery speed

- onboarding time for new hires, not just output from current experts

- knowledge retention, not just apparent documentation coverage

- mean time to understand a failure, not just mean time to close a task

- maintenance cost six months later, not just sprint velocity now

- employee capability growth, not just tool adoption

- supplier dependence, not just platform convenience
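
One way to put the list above into practice is to pair each easy metric with a guard metric and flag any case where the first improves while the second gets worse. The sketch below is a minimal illustration: the metric names, the pairings, and the sample numbers are all hypothetical, and every metric is assumed to be one where lower is better.

```python
# Each easy metric is paired with a guard metric.
# Names and sample values are hypothetical; lower is better for all of them.
PAIRS = {
    "cost_per_ticket": "reopened_ticket_rate",
    "cycle_time_days": "defects_per_release",
    "time_to_close_task_hours": "time_to_understand_failure_hours",
}


def brittleness_flags(before, after):
    """Return guard metrics that worsened while their easy metric improved."""
    flags = []
    for easy, guard in PAIRS.items():
        easy_improved = after[easy] < before[easy]
        guard_worsened = after[guard] > before[guard]
        if easy_improved and guard_worsened:
            flags.append(guard)
    return flags


before = {"cost_per_ticket": 6.0, "reopened_ticket_rate": 0.08,
          "cycle_time_days": 9.0, "defects_per_release": 4,
          "time_to_close_task_hours": 20.0,
          "time_to_understand_failure_hours": 3.0}
after = {"cost_per_ticket": 4.5, "reopened_ticket_rate": 0.13,
         "cycle_time_days": 7.0, "defects_per_release": 4,
         "time_to_close_task_hours": 15.0,
         "time_to_understand_failure_hours": 5.5}

print(brittleness_flags(before, after))
```

In this made-up example the delivery numbers all look better, but two of the three guard metrics have moved the wrong way, which is the pattern worth escalating.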

The broader datasets are still much messier than a lot of boardroom AI rhetoric suggests. The OECD's Employment Outlook 2023 notes that AI has so far affected job quality more than job quantity in many cases, that adoption is currently more common in larger and more capital‑intensive firms, and that after adjustment, the literature has so far found only modest productivity gains in the broader data, even while case studies for specific uses can show much larger effects.

That is a polite way of saying many firms may still be mistaking local process change for durable productivity.

What Better Incentives Could Look Like

This is the point where people usually reach for a slogan like "tax the robots". The instinct is understandable, but the implementation is much messier than it sounds.

A crude tax on automation is hard to define. Is a better internal script automation? Is a spreadsheet macro? Is a new search tool? Is a model‑assisted workflow that keeps the same team but changes the task mix? Taxing all of that indiscriminately would punish useful productivity improvements and likely entrench incumbents who can absorb compliance more easily.

The better question is where the externality actually sits.

More promising levers include:

- profit‑sharing, so productivity gains feed back into purchasing power

- employee ownership, so the upside is not captured only by capital

- shorter working weeks when output rises sustainably, rather than treating labour savings as redundancy by default

- serious retraining and redeployment budgets, not symbolic learning portals

- internal mobility programmes that move exposed workers into adjacent higher‑value work

- stronger competition policy, especially where AI markets are bottlenecked by compute, distribution, or platform control

- taxation aimed at excess rents and extreme concentration rather than useful tooling itself

- public investment in training, digital infrastructure, and transition support

- stronger social safety nets, because transition risk that is individually catastrophic becomes politically destabilising very quickly

- board‑level AI governance that treats workforce effects, concentration, and resilience as strategic issues rather than as HR clean‑up after the decision

That is also why the WEF numbers on upskilling and redeployment matter. The firms that are at least trying to transition people internally are not only being nicer. They are often protecting scarce capability and future demand at the same time.

What Responsible AI Automation Looks Like Inside a Company

The practical version of this is not complicated, but it does require discipline.

Start by classifying the proposed change correctly:

- Augmentation: the person still owns the task, but AI removes friction.

- Acceleration: the task remains human‑led, but throughput rises materially.

- Substitution: AI now performs a defined share of work that people previously did.

- Elimination: the role, task family, or team disappears rather than changing shape.

Those are not the same decision.
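
The four categories can be encoded so that a proposal has to declare which one it is. The sketch below is one possible reading, not a standard: the field names are hypothetical, and the 25 percent threshold for a "material" throughput rise is an arbitrary assumption that each organisation would need to set for itself.

```python
from dataclasses import dataclass
from enum import Enum


class ChangeType(Enum):
    AUGMENTATION = "augmentation"
    ACCELERATION = "acceleration"
    SUBSTITUTION = "substitution"
    ELIMINATION = "elimination"


@dataclass
class Proposal:
    role_survives: bool     # does the role, task family, or team remain?
    ai_owned_share: float   # share of prior human work AI would own (0..1)
    throughput_gain: float  # expected relative rise in output, 0.3 = +30%


def classify(proposal, material_gain=0.25):
    """Map a proposal onto the four categories; the threshold is an assumption."""
    if not proposal.role_survives:
        return ChangeType.ELIMINATION
    if proposal.ai_owned_share > 0:
        return ChangeType.SUBSTITUTION
    if proposal.throughput_gain >= material_gain:
        return ChangeType.ACCELERATION
    return ChangeType.AUGMENTATION


print(classify(Proposal(role_survives=True, ai_owned_share=0.0,
                        throughput_gain=0.4)).value)
```

The checks run from the most disruptive category to the least, so a proposal that removes a role is never filed as mere acceleration.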

Before approving an automation programme, leadership should ask:

- What task is being changed, not just what job title is being targeted?

- Is the gain real user value, or only internal output volume?

- What happens to the displaced work when the edge cases, escalations, and exceptions arrive?

- What happens to the displaced workers, and how much adjacent work could they plausibly absorb?

- Are we removing drudge work, or are we removing judgement, mentorship, and resilience capacity?

- What customer demand assumptions are baked into the business case?

- If every major competitor made the same move, would the resulting market still support the revenue line in this model?

- Who captures the savings over a three‑year horizon: customers, staff, the firm, or upstream vendors?

- Which metrics would tell us we have made the system more brittle, and who is accountable for watching them?

If the answers stop at "fewer people, same output", the proposal is probably too shallow.

Wrapping Up

AI can make firms more productive. That part is real.

What is not real is the assumption that local labour substitution automatically becomes broad prosperity. Sometimes it can. Sometimes it does the opposite. The difference sits in incentives, distribution, competition, organisational design, and whether productivity gains are allowed to circulate back into demand and human capability rather than being extracted as a narrow private win.

That is why the AI layoff trap is not mainly a story about technology. It is a story about governance.

If firms use AI to remove waste, strengthen teams, lower prices, open new categories, and share the upside, the economy can adapt. If they use it mainly to cut labour before purchasing power, skills, and organisational resilience can recover, they have not automated their way into resilience. They have automated away part of the demand, judgement, and institutional memory that their future growth depended upon.